To learn more about storage and memory for AI, register for our free webinar, The Importance of Storage in AI Infrastructure, on May 29th at 1 PM CST.

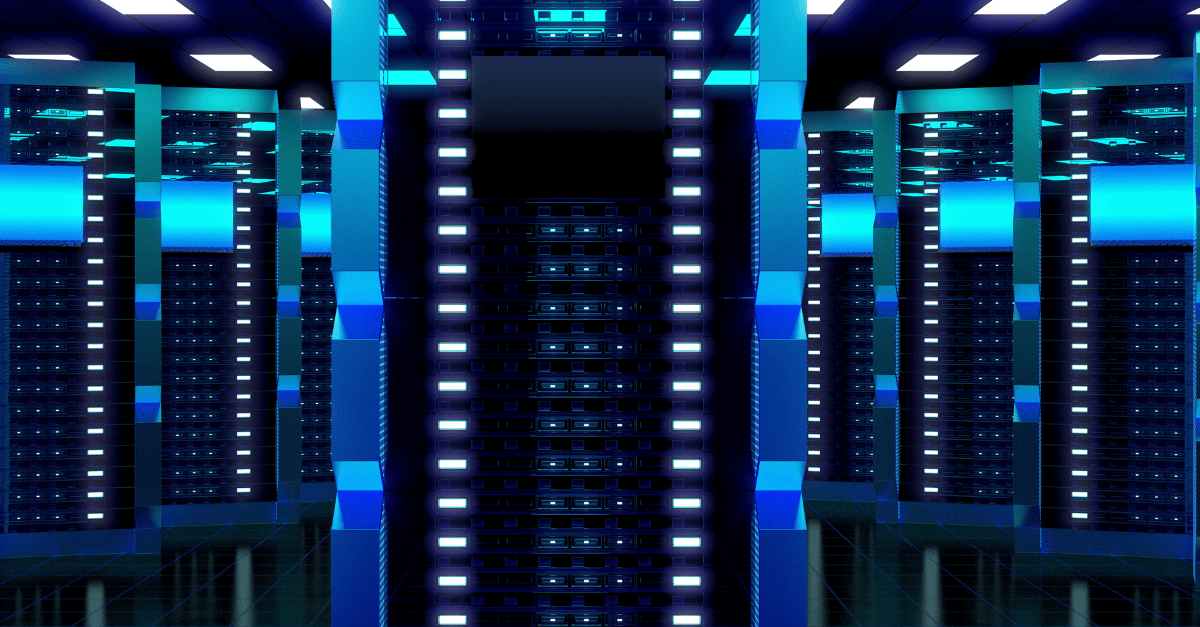

As the data center world scrambles to get a handle on the new horizon of AI deployments, part of the push is finding new ways to design and deploy racks that will serve this purpose. In the midst of this process, it’s important to keep in mind that the efficiency of AI does not depend on algorithms or computing power alone. At ECS, we understand that the work of AI also relies heavily on the foundations of memory and storage within data centers. Let’s take a look at why memory and storage are so important, why they are often overlooked, and what you can do to get ahead in this area with the help of Micron Technology and the ECS innovation lab.

Optimizing AI Hardware

Choosing the right servers, of course, is always crucial for a new deployment. With AI, though, ensuring you have the right drives for the job is more important than ever. This is true for two main reasons. First, storage plays a significant role in accelerating data access to GPUs. Networked data lakes that are not backed by strong, capacity-focused storage cannot keep AI servers adequately fed with the data they need, which creates bottlenecks. In this way, your storage setup is responsible for maintaining a continuous and efficient AI operational flow.

The second reason is that, for AI use cases, local storage acts as a cache for feeding training data into the High Bandwidth Memory (HBM) on the GPU. Consequently, a server with advanced memory solutions designed to deliver high throughput is critical to the performance of AI applications, from speeding up image classification in computer vision to improving the accuracy of voice recognition in natural language processing environments.

Overall, when it comes to setting up cabinets meant for AI use cases, utilizing advanced memory and storage hardware can significantly reduce the time required to train AI models, lower computing costs, and enhance the accuracy of AI inferencing across vital use cases such as computer vision and natural language processing. That’s why ECS has partnered with Micron to provide the opportunity for users to explore AI with the help of the best in high-performance servers.

The Overlooked Importance of Memory

Storage and memory are critical to AI applications, yet their importance is often understated. Although CPUs and GPUs garner much of the attention, it’s the storage and memory in the server that play pivotal roles in how swiftly AI models are trained and how accurately AI inferencing occurs. While CPUs and GPUs handle the bulk of the computational load, their efficiency is contingent upon how quickly and consistently they can access data. As ECS’s experiments alongside Micron in our innovation center have shown, if data ingestion lags, it can slow down the training process, causing not only delays but also increased costs due to underutilized resources.
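To make the cost of lagging data ingestion concrete, here is a minimal back-of-the-envelope sketch in Python. It assumes a deliberately simple model in which storage throughput is the only limit on GPU utilization; the function names and every number in it are hypothetical illustrations, not ECS or Micron measurements.

```python
def gpu_utilization(required_gbps: float, delivered_gbps: float) -> float:
    """Fraction of time GPUs do useful work, assuming storage is the only limit.

    required_gbps:  data rate (GB/s) the GPUs need to stay fully busy (assumed).
    delivered_gbps: data rate (GB/s) the storage layer actually sustains (assumed).
    """
    if required_gbps <= 0:
        raise ValueError("required_gbps must be positive")
    # GPUs cannot run faster than they are fed, and cannot exceed 100% busy.
    return min(1.0, max(0.0, delivered_gbps) / required_gbps)


def training_cost(ideal_hours: float, hourly_rate: float,
                  utilization: float) -> tuple[float, float]:
    """Return (wall-clock hours, total cost) for a job stretched by idle GPUs."""
    if utilization <= 0:
        raise ValueError("utilization must be positive")
    # The same amount of compute takes longer when the GPUs sit idle part of the time.
    hours = ideal_hours / utilization
    return hours, hours * hourly_rate


if __name__ == "__main__":
    # Hypothetical example: GPUs need 10 GB/s, storage sustains only 6 GB/s.
    util = gpu_utilization(required_gbps=10.0, delivered_gbps=6.0)
    hours, cost = training_cost(ideal_hours=100.0, hourly_rate=30.0, utilization=util)
    print(f"utilization={util:.0%}, hours={hours:.1f}, cost=${cost:,.0f}")
```

Under these assumed figures, a job that would take 100 hours on fully fed GPUs stretches to roughly 167 hours, and the bill grows in proportion. Real systems are more complicated (caching, prefetching, and overlapping I/O with compute all help), so treat this as a way to frame the question rather than a prediction.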

There are a few use cases that ECS has looked at in this regard. For instance, in AI’s application to computer vision, which is used in facial recognition and theft tracking, fast, high-bandwidth, low-latency memory is crucial. It’s the only way to ensure that massive volumes of data are processed swiftly enough to allow real-time applications to function effectively. Similarly, in natural language processing, efficient data flow is essential to prevent backlogs that can delay response times and hurt the performance of AI-driven personal assistants.

Testing Your Memory

Solutions built around high-performance hardware like solid state drives (SSDs) and dynamic random access memory (DRAM) can be tricky. It’s important to get them right, and data centers should ensure that they are optimizing their configurations and mitigating risk. One way to accomplish this is to test an AI solution in an innovation lab before deploying it in a real-world environment. That way, storage and memory can be rigorously tested under different scenarios and workloads before any purchase is made or any approach is committed to. It’s the very reason that the ECS innovation lab exists.

Here at ECS, we take these concepts further, too. Beyond performance validation, testing AI storage and memory solutions also creates opportunities for innovation within the deployment. For instance, testing newer technologies (such as the latest AI accelerators) against pre-existing solutions allows you to assess the tangible benefits they offer over previous generations. Experimenting with new combinations and configurations in innovation labs can lead to breakthroughs in AI deployment and performance, and it is where valuable, tangible competitive advantages can be developed. Alongside our partners at Micron, we are using these experiments to explore new frontiers in AI workloads and to discover the best setups for a new, data-rich future in the world of computing.

Strength In Storage

At ECS, we believe that storage and memory are not just supporting players in AI. They are central to the successful deployment and scaling of AI technologies. For those looking to future-proof their AI applications, understanding and investing in the right memory and storage solutions is paramount, as they are foundational not just to operational functionality but to future scalability too.

As AI continues to evolve, the innovation and testing in our state-of-the-art innovation lab will lead the way in shaping the future of AI applications, making an educated choice in storage and memory solutions all the more critical. After all, this is where experimenting with different memory and storage configurations, powered by the very best in hardware from Micron, can yield optimizations that push AI applications from the experimental stage to real-world scalability and reliability. When you’re investing in an AI deployment, commit it to memory.